New Economics of the Agentic Firm

- olivermorris83

- Mar 18

Updated: Mar 19

Inside the firm the supply of intelligence is changing. What used to be scarce is becoming abundant. What used to sit quietly in the background is becoming the bottleneck. And the management model built for human-paced knowledge work is starting to break.

That is the real significance of AI agents.

An agent is not just a better tool for producing files, reports, code, or drafts. It is a new unit of production. And when the unit of production changes, the economics of the company change with it.

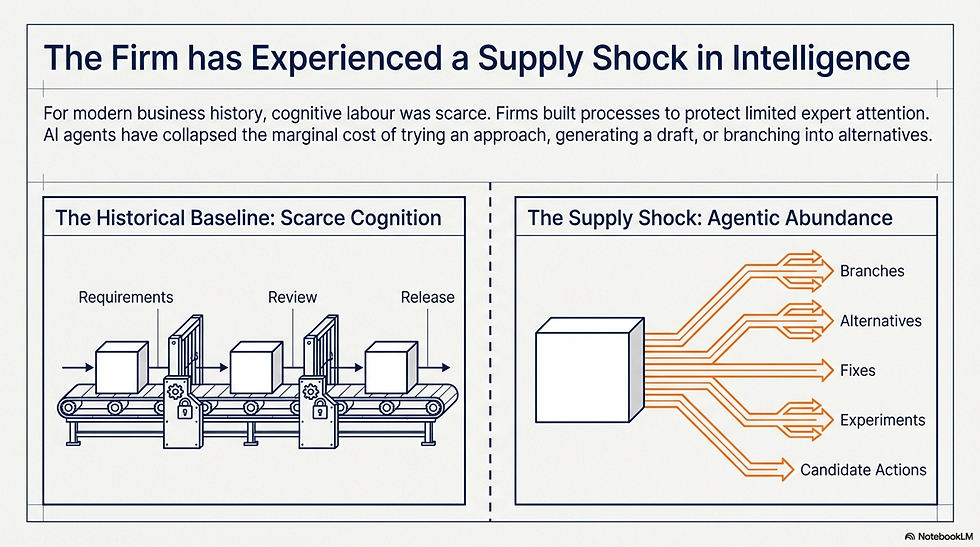

For most of modern business history, cognitive labour was scarce. Expert attention was limited. Analysis took time. Producing a first version of anything meaningful was expensive. So firms built processes to protect scarce human effort. Requirements came before execution. Review came before release. Managers allocated limited expert time carefully.

Agents change that equation. The marginal cost of trying an approach, branching into alternatives, or producing another version has collapsed.

This is a supply shock in intelligence. And whenever supply changes sharply, scarcity moves elsewhere.

Scarcity has moved. What remains scarce inside the agentic firm is not output. It is:

* available context

* judgment

* review capacity

* coordination

* trust

* decision rights

* accountable ownership

That is the new economics. When intelligence gets cheap, context becomes capital.

By context, I do not just mean a longer prompt or better retrieval. I mean the operating knowledge that makes action useful inside a real business: priorities, constraints, exceptions, risk tolerances, commercial logic, regulatory nuance, internal standards, and the tacit understanding of how things are actually done. Some of that lives in documents. Much of it lives in people, habits, scars, and unspoken norms.

Agents can only act as well as the context they are given or can reliably retrieve. That is why context engineering matters. Not as a prompt-writing trick, but as the discipline of building the informational infrastructure that lets synthetic intelligence act coherently inside the firm. More on this later.

I have felt this directly in my two years working with AI agents. The productivity gain is real. So is the management burden.

Agents never sleep. They never get tired. They never decide the team has already seen enough for one day. They generate branches, alternatives, fixes, experiments, and candidate actions continuously. That sounds like pure upside until you meet the human bottleneck on the other side.

Burnout is a real risk.

Not because the AI is struggling, but because the humans are drowning in output. Cheap cognition creates expensive management. As generation becomes abundant, review becomes the constraint. The old assumption was that production was slower than oversight. That is no longer safe.

If agents can produce far more code changes, drafts, analyses, or candidate actions than humans can inspect one by one, then “review harder” is not an operating model. Oversight has its own failure mode, one we have seen before in semi-autonomous driving: vigilance decay.
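The arithmetic behind that claim is simple enough to sketch. The numbers below are made-up assumptions, not measurements, and `review_backlog` is a hypothetical helper written only for this illustration.

```python
def review_backlog(produced_per_day: int, reviewed_per_day: int, days: int) -> list[int]:
    """Return the unreviewed backlog at the end of each day."""
    backlog = 0
    history = []
    for _ in range(days):
        # New output arrives; reviewers clear at most their daily capacity.
        backlog = max(0, backlog + produced_per_day - reviewed_per_day)
        history.append(backlog)
    return history


# Assumed rates: agents produce 200 items a day, humans can inspect 60.
print(review_backlog(produced_per_day=200, reviewed_per_day=60, days=5))
# -> [140, 280, 420, 560, 700]
```

Under these assumptions the backlog grows by 140 items every day. Working longer hours only changes the slope, which is why the fix has to be a different review model rather than more review effort.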

The same dynamic is emerging with agents. When output is continuous, fluent, and well-formatted, reviewers habituate. They scan rather than read. They approve rather than judge.

There is a second, quieter injury running alongside the cognitive one. As employees collaborate more with agents and less with each other, research is measuring a rise in workplace loneliness and emotional fatigue.

The texture of work changes: fewer social exchanges, less informal coordination, more solitary task execution alongside a machine that never tires. Studies are linking this relational thinning to counterproductive work behaviour — disengagement and in some cases deliberate errors.

There is a limited analogy here with AI itself. In a world of scarce compute, AI systems had to be handcrafted. Engineers stayed close to the rules, the architecture, the moving parts. But the bitter lesson was that once enough compute arrived, grown systems beat handcrafted ones. Scale outperformed local legibility.

Something similar may now be happening in knowledge work.

When output was scarce, managers could stay close to every line. They could read every document, inspect every change, shape every draft. Supervision was artisanal.

As agentic output becomes abundant, that breaks. We move from craft supervision to statistical management.

That means less confidence that any human will understand every local act of production. More emphasis on whether the system performs reliably enough overall. Less line-by-line inspection. More thresholds, monitoring, sampling, and exceptions. We understand less of each individual act. We govern more of the system.

In that sense, we are all a little more C-suite now.

The work shifts upward. Fewer people are directly crafting every output. More people are defining thresholds, deciding what counts as acceptable performance, and carrying responsibility for systems they cannot inspect exhaustively.

But this shift does not distribute gains evenly. Research in the Journal of Political Economy finds that autonomous AI primarily benefits the most knowledgeable individuals in a firm's hierarchy, while less autonomous AI tends to benefit those lower down (University of Chicago Press).

The uncomfortable implication: as agents become more autonomous, the knowledge hierarchy inside the firm may steepen rather than flatten. There is also a pipeline risk that leaders are only beginning to notice. Automating entry-level cognitive work efficiently may hollow out the talent development path for mid-level and senior roles (Fortune), the very roles responsible for supplying the judgment, context, and accountability your agentic system depends on.

This is where output evaluations (aka evals) come in. Context tells the agent what world it is operating in. Evals tell us whether it is operating well enough. Both matter and both are a lot of work.

The Unit of Work Has Changed

Andrej Karpathy claims the unit of work is becoming the agent, not the file. That has deeper consequences than it first appears.

There is an analogy from software development. In the early years of the web each page was a fixed document of HTML. These days each page is actually a function, a mini code factory which assembles the page for each reader, tailored if necessary. The unit of production shifted from the static file to the dynamic function. And everything had to change in order to feed this new throughput:

* Content management systems: separated content from presentation.

* Caching layers: decided which outputs could be reused and which had to be fresh.

* Content delivery networks: pushed assets to the edge, because latency matters when assembling in real time.

* Load balancers: ensured no single failure brought down the whole system.

* Monitoring: tracked performance continuously, because when output is generated dynamically, failure is not a broken file. It is a silent malfunction serving errors far and wide.

Each of these layers has a direct parallel in the agentic firm.

* Content management -> Context management: extracting business knowledge from the heads of experts and the depths of policy documents and making it structured, retrievable, and version-controlled.

* Caching -> Institutional memory: agents often need the organisational equivalent of a cached response: a known-good answer, a precedent, a pre-approved template.

* Content delivery -> Context delivery: the minimum viable context, scoped for the task at hand. Assembling the right subset of organisational knowledge for the right agent at the right moment.

* Load balancing -> Orchestration: routing tasks to the right agents, managing parallel workstreams, ensuring one overloaded review queue does not stall an entire pipeline.

* Monitoring -> Evaluation: continuous assessment of generated outputs. You do not review every draft. You build evals, define thresholds, sample systematically, and investigate exceptions.
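The evaluation layer is the easiest of these to make concrete. What follows is a minimal sketch, not a recommended framework: `passes_eval` stands in for whatever check a team actually writes, the 0.90 threshold is an arbitrary illustration, and the Wilson score interval is my own choice of a conservative way to turn a small sample into a pass-rate estimate.

```python
import math
import random
from typing import Callable, Sequence


def wilson_lower_bound(passes: int, n: int, z: float = 1.96) -> float:
    """Lower bound of the Wilson score interval for a pass rate (z=1.96 is ~95%)."""
    if n == 0:
        return 0.0
    p = passes / n
    denom = 1 + z * z / n
    centre = p + z * z / (2 * n)
    margin = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n))
    return (centre - margin) / denom


def sample_and_evaluate(
    outputs: Sequence[str],
    passes_eval: Callable[[str], bool],
    sample_size: int,
    threshold: float,
    seed: int = 0,
) -> dict:
    """Evaluate a random sample and decide whether the batch needs escalation."""
    rng = random.Random(seed)
    sample = rng.sample(list(outputs), min(sample_size, len(outputs)))
    exceptions = [o for o in sample if not passes_eval(o)]
    lower = wilson_lower_bound(len(sample) - len(exceptions), len(sample))
    return {
        "sampled": len(sample),
        "pass_rate_lower_bound": lower,
        "escalate": lower < threshold,  # the whole batch gets a closer look
        "exceptions": exceptions,       # individual failures to investigate
    }


# Hypothetical batch: 1,000 agent drafts, of which every 50th is empty.
drafts = ["" if i % 50 == 0 else f"draft {i}" for i in range(1000)]
report = sample_and_evaluate(drafts, passes_eval=lambda d: bool(d.strip()),
                             sample_size=100, threshold=0.90)
print(report["sampled"], report["escalate"], len(report["exceptions"]))
```

The design point is that a human reads the exceptions and responds to the escalation flag, rather than reading all 1,000 drafts. The lower bound is deliberately pessimistic, so a small sample cannot certify a batch on luck alone.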

The transition from static to dynamic created a long, messy middle period, where half-static, half-dynamic systems were stitched together with brittle integrations and technical debt. I will borrow the term "tag soup" for it, a phrase that originally described the malformed HTML of the same era.

Most companies adopting agents today are in their own tag soup phase. Some workflows are agentic. Some are manual. Some are awkwardly hybrid. This is not a failure of ambition. It is the natural architecture of a transition that has not yet found its standards.

The web matured because the industry converged on shared infrastructure: HTTP, REST APIs, CDN protocols, container orchestration, observability standards. The agentic firm will need its own convergence — on context formats, evaluation frameworks, delegation protocols, and accountability structures — before the full productivity gains arrive.

We are on the way with MCP, A2A, A2P and similar protocols. In the web era, the firms that won were not the ones that produced the most pages. They were the ones that built the best systems for assembling, delivering, monitoring, and governing dynamic output at scale. The same will be true of agents.

Humans In The System

When each capable worker is effectively already paired with AI, the structure of teamwork starts to change. In software, I notice less pair programming between humans because each coder is already in a pair with an AI. Work splits into more modular chunks. Projects start to look more like prefab assembly: separately generated components integrated through interfaces, tests, and constraints.

The same pattern is likely to spread elsewhere. Research, drafting, reporting, analysis, internal operations, and customer workflows can all become more parallel, more modular, and more assembly-oriented.

That does not eliminate coordination costs. It moves them.

A useful analogy here is containerisation in shipping. Containers did not simply make ports a bit faster. They introduced a new unit of production and forced the surrounding system to change: ports, cranes, warehousing, scheduling, inland transport, and capital investment. The winners were not simply the firms that bought containers. They were the ones that redesigned operations around them.

Agents may do something similar in knowledge work. They are not just faster assistants. They are a new unit of production. And once that unit arrives, the bottlenecks move: away from drafting and toward coordination, observability, evaluation, and context supply.

That is where many firms are still underestimating the challenge.

The modularity that agents enable carries its own failure mode, and it's worth naming directly.

When teams build agents independently, without a unifying architecture, the result is sprawl — a proliferating tangle of siloed, duplicative, often insecure agents that each solve a local problem while collectively producing incoherence.

Individual wins mask systemic fragility. Technical debt accumulates invisibly. Each agent acting independently, driven by local optimisation goals, introduces a degree of randomness and disorder — a gradual erosion of structure that can cause the organisation to resemble a chaotic, decentralised network with blurring boundaries. See California Management Review.

The containerisation analogy is instructive here too: ports that let every shipper design their own container format didn't win. Standardisation was the unlock — and it required deliberate, costly decisions about shared infrastructure before the efficiency gains arrived.

Context Becomes Infrastructure

If agents are the new unit of production, then context is the infrastructure that allows them to move productively through the firm.

This is why context engineering matters.

Not as a prompt-writing trick, but as the discipline of building the informational infrastructure that lets synthetic intelligence act coherently inside a real business. Priorities, constraints, exceptions, risk tolerances, commercial logic, regulatory nuance, internal standards, tool access, memory, and state all need to be available in the right form, at the right time, under the right constraints.

Eric Broda's Agentic Mesh proposes that firms need the equivalent of ports, cranes, and logistics for context: systems to extract it from operational environments, keep it fresh, compile the relevant subset for the next step, and deliver what he calls a minimum viable context. In other words, context cannot remain an artisanal activity. It has to become part of the firm’s operating infrastructure.
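To show what "compile the relevant subset" might look like in practice, here is a small sketch. It is not Broda's design: `ContextItem`, `compile_context`, the tag-overlap scoring, and the token and freshness limits are all assumptions of mine, chosen to keep the example short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextItem:
    name: str
    tags: frozenset[str]  # topics this piece of knowledge covers
    tokens: int           # rough size when placed in a prompt
    age_days: int         # time since it was last verified


def compile_context(items, task_tags, token_budget, max_age_days):
    """Pick fresh, relevant items, most relevant first, within a token budget."""
    relevant = [
        i for i in items
        if i.tags & task_tags and i.age_days <= max_age_days
    ]
    # Most overlapping tags first; smaller items win ties.
    relevant.sort(key=lambda i: (-len(i.tags & task_tags), i.tokens))
    chosen, used = [], 0
    for item in relevant:
        if used + item.tokens <= token_budget:
            chosen.append(item)
            used += item.tokens
    return chosen


# Hypothetical knowledge base for a refunds agent.
library = [
    ContextItem("refund_policy", frozenset({"refunds", "policy"}), 400, 10),
    ContextItem("pricing_rules", frozenset({"pricing"}), 300, 5),
    ContextItem("escalation_rules", frozenset({"refunds", "escalation"}), 250, 2),
    ContextItem("old_refund_memo", frozenset({"refunds"}), 100, 400),
]
picked = compile_context(library, {"refunds", "escalation"},
                         token_budget=700, max_age_days=90)
print([item.name for item in picked])
# -> ['escalation_rules', 'refund_policy']
```

The stale memo is dropped even though it is on topic, and the pricing rules never reach the agent. A real system would score relevance far more carefully, but the shape is the same: filter for freshness, rank for relevance, stop at the budget.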

Prompt engineering was the prototype phase. Context engineering is the operating model.

This brings us to the most important point for leadership.

As agents become more capable, firms will increasingly delegate operational rights: the right to retrieve information, draft messages, run workflows, write code, make recommendations, or trigger actions under defined conditions.

Responsibilities will also spread. Teams will need to maintain context, thresholds, evals, escalation rules, and monitoring systems around those agents.

But accountability does not disappear. This is the trifecta that matters:

* Agents may hold more operational rights

* Teams may share more execution responsibilities

* But accountability remains with named humans

That is not a philosophical footnote. It is the core governance fact of the agentic firm. The strategic challenge is not simply adoption. It is deciding, with precision, where rights may be delegated, what responsibilities must be built around those rights, and who remains accountable when the system fails.
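One way to make that split hard to forget is to write it into the data structure that grants an agent its rights. The sketch below is purely illustrative: `Delegation`, `authorise`, the agent name, the team name, and the owner are all hypothetical, and a real system would sit on top of an existing identity and permissions layer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Delegation:
    agent: str
    allowed_actions: frozenset[str]  # operational rights held by the agent
    responsible_team: str            # maintains context, evals, monitoring
    accountable_owner: str           # a named human, never a team or an agent

    def __post_init__(self):
        if not self.accountable_owner.strip():
            raise ValueError("every delegation needs a named accountable human")


def authorise(delegation: Delegation, action: str) -> tuple[bool, str]:
    """Return (allowed, contact): the team for routine oversight of permitted
    actions, the accountable owner for anything outside the delegation."""
    if action in delegation.allowed_actions:
        return True, delegation.responsible_team
    return False, delegation.accountable_owner


billing = Delegation(
    agent="billing-agent",
    allowed_actions=frozenset({"retrieve_info", "draft_message"}),
    responsible_team="finance-ops",
    accountable_owner="Jane Doe",  # placeholder name
)
print(authorise(billing, "draft_message"))  # -> (True, 'finance-ops')
print(authorise(billing, "issue_refund"))   # -> (False, 'Jane Doe')
```

The constructor refuses to create a delegation without a named owner, which is the governance point in miniature: rights and responsibilities can be spread widely, but the accountable field can never be left blank.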

The New Economics of the Firm

So the internal economics of the company are shifting.

What gets cheaper:

* generation

* exploration

* iteration

* branching

* first drafts

* routine synthesis

* execution of many cognitive sub-tasks

What remains scarce:

* available context

* judgment

* review capacity

* eval design and maintenance

* trusted escalation

* coordination across modules

* context infrastructure

* named accountability

That is the real shape of the change. The firms that thrive in this environment will not simply be those with the most agent output. They will be the ones that redesign management around abundant intelligence, limited context, and statistical control.

Because that is the new bottleneck. Not whether the machine can produce, but whether the organisation can remain coherent while it does.

It is early days and scepticism is fair, especially in large companies. MIT Nobel laureate Daron Acemoglu has written of a structural concern: that companies are building "so-so automation" — substituting human effort without creating genuinely new tasks, raising average output per worker while leaving marginal productivity flat or declining.

The macro numbers conceal two very different experiences. For large organisations, AI gains appear to be partly absorbed by the same coordination overhead they already had — agents and humans generating output that then gets routed through existing approval, review, and rework processes.

Research finds that over 40% of workers in larger firms have already encountered low-quality AI-generated output that cost nearly two hours of rework per instance. Researchers are calling this "workslop": productivity gains for one person instantly become overhead for another.

For the individual operator, the freelancer, or the small tight-context team, the gain goes directly to output. This is perhaps the most honest way to read the paradox: AI is genuinely transformative at the level of the individual working with good context and high agency, and often disappointing at the level of the large firm that hasn't redesigned its coordination model.

It's a problem we first wrote about 18 months ago, comparing the arrival of agents in the workplace with the arrival of electricity in the factory. Factories had to be rewired to bring electricity to every workbench before they saw its benefits over steam.

None of this means the structural changes described in this piece are wrong. It means they are slower, harder, and more contingent than the conference circuit suggests.

The firms that will look back and say agents transformed them are likely to be the ones that spent 2025 and 2026 doing the unglamorous work — context infrastructure, eval design, governance, accountability frameworks — while their competitors were still counting agent deployments as a measure of progress.
